Nope, just any, really, so it looks good. :)

Trying to help grow Lemmy because I’m not into self-abuse.

I am still active and check my account periodically.

- 16 Posts

- 18 Comments

I work better at night.

Thanks for moving the content from Reddit. I did the same for the important content. :)

4·1 year ago

No.

Maybe a weekly thread?

3·1 year ago

The first one. It reads faster, and you don’t have to stack context in your head if it’s ==.

Personally though, I prefer what I call short-circuiting: return right away if it’s null. It’s basically input sanitization.
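Here’s a rough sketch of what I mean, in Python. The `display_name` function and the `user` dict are made up for illustration and aren’t from the original question; the pattern is usually called an early return or guard clause:

```python
from typing import Optional


def display_name(user: Optional[dict]) -> str:
    # Guard clause: return right away if there is nothing to work with.
    if user is None:
        return "anonymous"

    # Past this point user is known to be a dict, so the main logic
    # stays flat instead of being nested inside an "if user is not None".
    name = user.get("name", "").strip()
    return name if name else "anonymous"
```

The null case is dealt with once at the top, so the rest of the function never has to think about it again.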

01·1 year ago

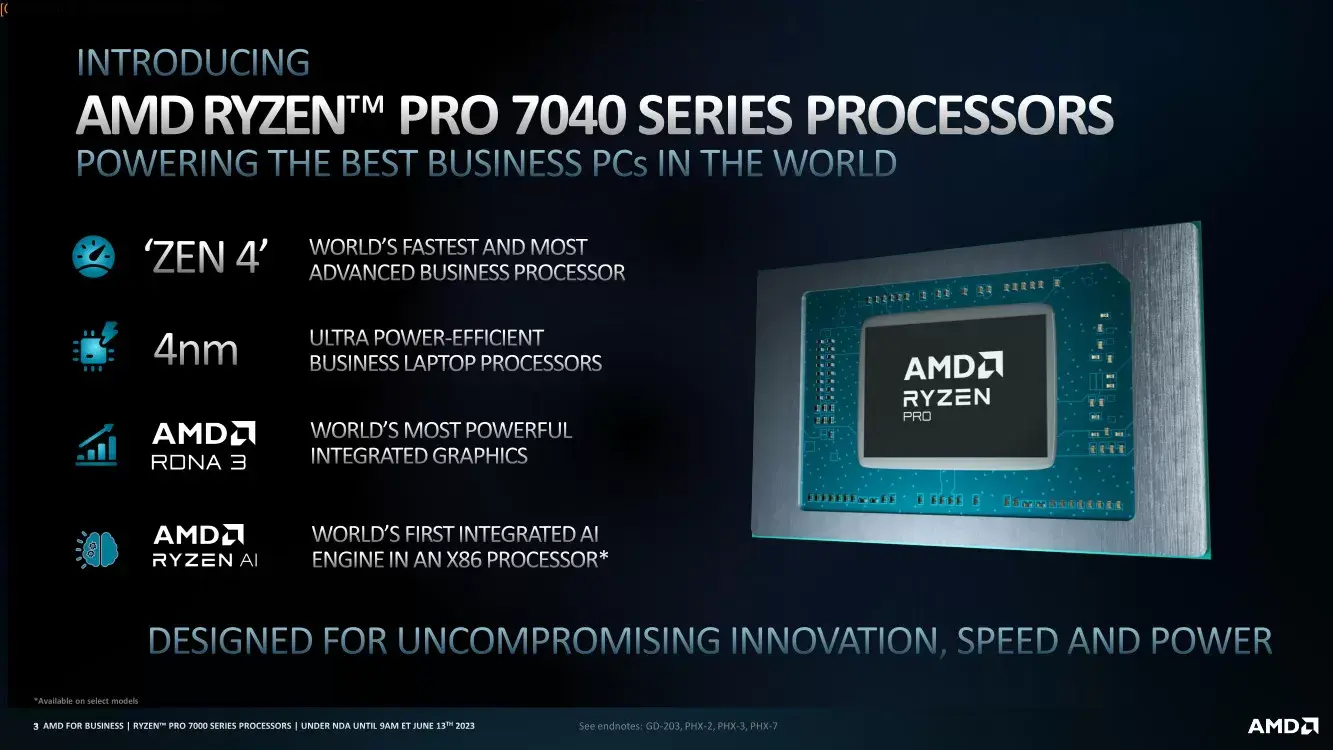

While I understand the comparison between a 4070 and a 3080, I think that entirely ignores the cutting-edge tech users.

Some people want the highest frames. Personally, I want 4K at 240 Hz. I play FPS games, and I want nice quality too. That has yet to exist as a product, so we still have a ways to go.

I don’t like DLSS or frame generation. I did try them, and they had issues in Cyberpunk. The quality just wasn’t there.

The other thing is AI. There are companies built on Stable Diffusion. You pay for time or per result, and in return you can generate images or run text queries. Those are backed by GPUs at the end. I’ve tried Stable Diffusion locally on a 4090 and even then, it’s not fast.

As for Intel and AMD, I unfortunately have no hope. I hold a large amount of AMD stock and I really hope they get it right, but their software group isn’t at the cutting edge and is far behind. Their VR issues have been ongoing and are mentioned in every update. When you bring that up, users get upset and say it’s good enough; it’s not. As for Intel, they have yet to get their head out of their ass. With Pat at the helm, I have no hope anytime soon. He’s still wearing the marketing hat, thinking they’re ahead.

🤷‍♂️

0·1 year ago

You should look at the code in Lemmy lol. I get that people think open source means more eyes, but it doesn’t mean higher quality.

1·1 year ago

Previous build: It was 4.5L. What made it unique was the finickiness: it kept shutting off with stock settings because it runs off a Dell 330W power brick. I had to undervolt both the CPU and GPU, and it worked well for ~6 years.

Current build: It’s a 4090 and 7800X3D in a 10L case.

2·1 year ago

If it’s no longer usable to you, either sell or donate it. Just because you don’t have a use for it anymore doesn’t mean you have to make a new use for it.

I’d love to know this too.

0·1 year ago

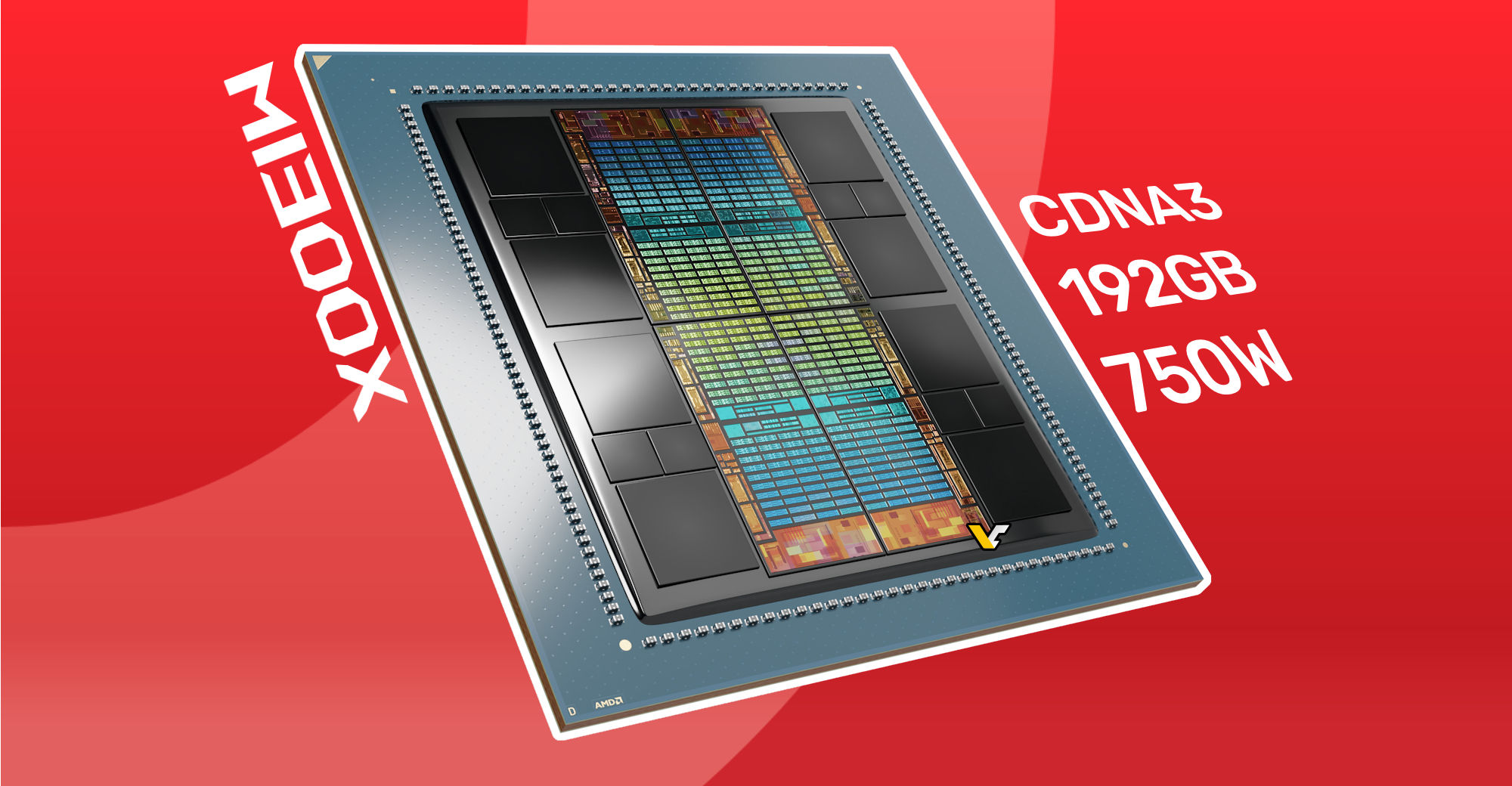

750W is not a lot of power… It’s less than a microwave or a water heater.

0·1 year ago

Why? 750W of power can be had from an SFX Corsair PSU. I’m using that to power a 4090 and 7800X3D.

0·1 year ago

Idk if that’s true though. Nvidia’s GPU sales are strong.

I’m a large AMD holder, but I purchased an Nvidia GPU because their offering doesn’t have as many bugs, they fix things quickly, stability is excellent, performance is good and efficient, and the heat sinks are good.

Meanwhile AMD’s offering has MANY bugs, every software release notes a known VR bug that they still don’t fix, stability is all over the place, the cards are neither efficient nor the best, and there are so many heat sink paste issues it’s a joke at that price.

It’s one thing to be an AMD fan, but it’s another to ignore the issues they clearly have.

01·1 year ago

This article is from 3 years ago.

Looks great, thanks! :)